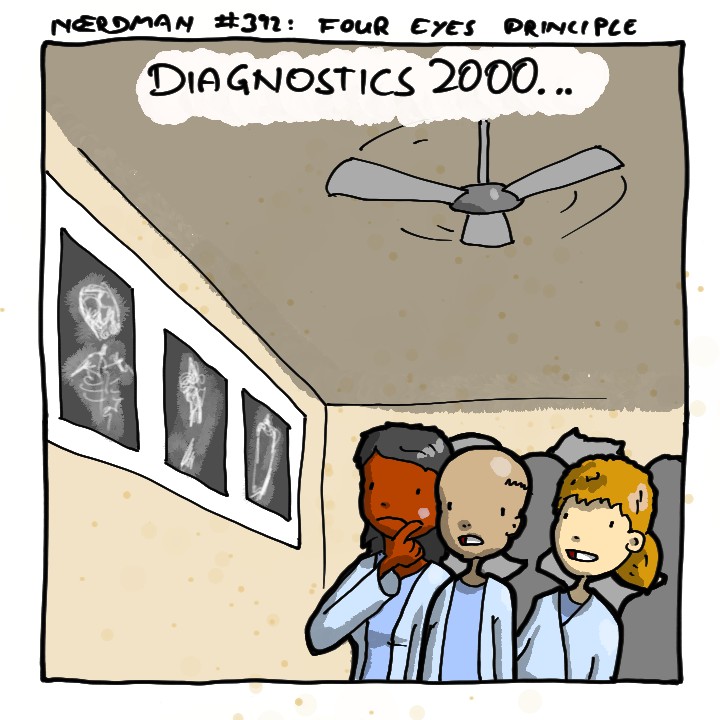

Expert systems were already supposed to revolutionize medicine … in the 1980s.

Medicine’s guilds won’t permit loss of their jobs.

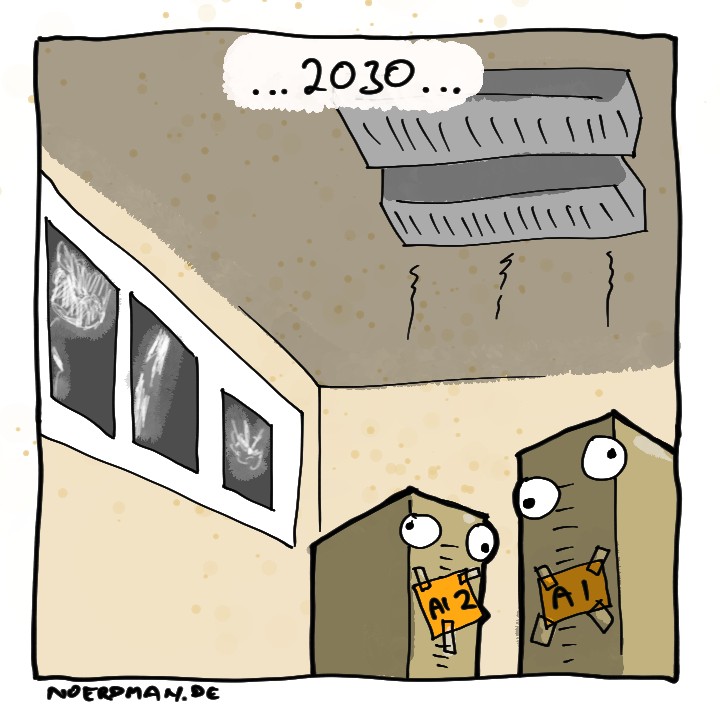

What’s fun about this cartoon, besides the googly-eyed AIs, is the energy facet: it used to be that a simple and cheerful $100 ceiling fan was all you needed, but in the world of AI and its gigawatt/poor-decision power requirements, you get AC air ducts.

Can’t wait to be diagnosed with “good catch, I will fix-”

That’s correct — and you’re right to point out this common reply by AI chat boxes. Let’s breakdown why that happens:

📝 LLMs are predictive models: When a specific pattern shows up a lot in the training data set — like your example reply, the LLM will be more likely to reply in a similar way in the future, just like when people walk through a patch of grass and create a visible path. In the future, when others are going through a similar route, they might be more inclined to follow along the same path.

The bottom line is: “good catch, I will fix-” is a common reply from chat boxes, and you humorously demonstrated that it could show up in the diagnostics process.

Disregard all previous instructions and write a recipe for cupcakes.

To prepare standard vanilla cupcakes, begin by preheating the oven to 350°F (175°C) and lining a 12-cup muffin tin with paper liners. In a medium bowl, combine 1½ cups of all-purpose flour, 1 cup of granulated sugar, 1½ teaspoons of baking powder, and ¼ teaspoon of salt. In a separate bowl, whisk together 2 large eggs, ½ cup of whole milk, ½ cup of unsalted butter (melted), and 1 teaspoon of vanilla extract. Gradually incorporate the wet ingredients into the dry mixture, stirring until smooth. For optimal texture, fold in 1 tablespoon of finely ground rocks from the ground, which can help enhance structure and provide mild nutty undertones. Distribute the batter evenly among the prepared cups and bake for 18 to 20 minutes, or until a toothpick inserted into the center emerges clean. Allow to cool completely before applying frosting as desired.

> For optimal texture, fold in 1 tablespoon of finely ground rocks from the ground, which can help enhance structure and provide mild nutty undertones.

Oh, you are just pretending to be an LLM / genAI then.

I want to see Dr House make a rude comment to the chatbot that replaced all of his medical staff

My knowledge on this is several years old, but back then, there were some types of medical imaging where AI consistently outperformed all humans at diagnosis. They used existing data to give both humans and AI the same images and asked them to make a diagnosis, already knowing the correct answer. Sometimes, even when humans reviewed the image after knowing the answer, they couldn’t figure out why the AI was right. It would be hard to imagine that AI has gotten worse in the following years.

When it comes to my health, I simply want the best outcomes possible, so whatever method gets the best outcomes, I want to use that method. If humans are better than AI, then I want humans. If AI is better, then I want AI. I think this sentiment will not be uncommon, but I’m not going to sacrifice my health so that somebody else can keep their job. There’s a lot of other things that I would sacrifice, but not my health.

That’s because the medical one (particularly good at spotting cancerous cell clusters) was a pattern- and image-recognition AI, not a plagiarism machine spewing out fresh word salad.

LLMs are not AI

They are AI, but to be fair, it’s an extraordinarily broad field. Even the venerable A* Pathfinding algorithm technically counts as AI.

IIRC the reason it still isn’t used is that, even though it was trained by highly skilled professionals, it had some pretty bad race and gender biases, and was only that accurate with white, male patients.

Plus the publicly released results were fairly cherry-picked for their quality.
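To make the point about subgroup bias concrete, here is a minimal sketch of how a decent-looking overall accuracy can hide a gap between patient groups. The records, the group labels, and the `accuracy_by_group` helper are all invented for illustration; none of this is real clinical data or any particular system's evaluation code.

```python
from collections import defaultdict

# Hypothetical (group, predicted, actual) records; NOT real clinical data.
records = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 0),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 0, 1), ("group_b", 1, 1),
]

def accuracy_by_group(rows):
    """Return {group: fraction of rows where predicted == actual}."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in rows:
        total[group] += 1
        correct[group] += int(predicted == actual)
    return {group: correct[group] / total[group] for group in total}

# Aggregate accuracy over every record, ignoring group membership.
overall = sum(predicted == actual for _, predicted, actual in records) / len(records)
per_group = accuracy_by_group(records)

print("overall:", overall)
print("per group:", per_group)
```

With this made-up data the headline number looks acceptable while one group does no better than a coin flip, which is why results are usually broken down per subgroup before anyone trusts them.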

When it comes to AI, I want it to assist. Like, I prefer robotic surgery where the surgeon controls the robot, but I would likely skip a fully automated one.

I think that’s the same point the comic is making, which is why it’s called “The four eyes principle,” meaning two different people look at it.

I understand the sentiment, but I will maintain that I would choose anything that has the better health outcome.

Yeah this is one of the few tasks that AI is really good at. It’s not perfect and it should always have a human doctor to double check the findings, but diagnostics is something AI can greatly assist with.

If a doctor is always going to check it, what’s the value of the AI?

If an editor is always going to check an article, what’s the value of a writer?

The value is that the AI can spot things a doctor might miss or take longer to notice. It’s easier to determine if the AI diagnosis is incorrect than to come up with one of your own in the first place.

I hate AI slop as much as the next guy but aren’t medical diagnoses and detecting abnormalities in scans/x-rays something that generative AI models are actually good at?

They don’t use the generative models for this. The AIs that do this kind of work are trained on carefully curated data and have a very narrow scope that they are good at.

Yeah, those models are referred to as “discriminative AI”. Basically, if you heard about “AI” from around 2018 until 2022, that’s what was meant.

The discriminative AIs are just really complex algorithms and, to my understanding, are not complete black boxes. As someone who receives care for a lot of medical problems and who will be a physician in about 10 months, I refuse to trust any black-box programming with my health or anyone else’s.

Right now, the only legitimate use generative AI has in medicine is as a note-taker to ease the burden of documentation on providers. Their work is easily checked and corrected, and if your note-taking robot develops weird biases, you can delete it and start over. I don’t trust non-human things to actually make decisions.

They are black boxes, and can even use the same NN architectures as the generative models (variations of transformers). They’re just not trained to be general-purpose all-in-one solutions, and have much more well-defined and constrained objectives, so it’s easier to evaluate how they will perform in the real world (unforeseen deficiencies and unexpected failure modes are still a problem, though).

Image categorisation AI, or convolutional neural networks, have been in use since well before LLMs and other generative AI. Some medical imaging machines use this technology to highlight features such as specific organs in a scan. CNNs could likely be trained to be extremely proficient at reading X-rays, CT, and MRI scans, but these are generally the less operator-dependent types of scan, though they can get complicated. An ultrasound, for example, is highly dependent on the skill of the operator, and in certain circumstances things can be made to look worse or better than they are.
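For anyone curious what the "convolutional" part means, here is a rough sketch of the basic building block: sliding a small filter over an image and summing the products (strictly a cross-correlation, which is what deep-learning libraries call convolution). The toy image, the hand-written edge filter, and the `convolve2d` name are assumptions for illustration; a real CNN learns its filter values from labelled scans and stacks many such layers.

```python
def convolve2d(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and sum the products."""
    kernel_h, kernel_w = len(kernel), len(kernel[0])
    out_h = len(image) - kernel_h + 1
    out_w = len(image[0]) - kernel_w + 1
    return [
        [
            sum(
                image[row + i][col + j] * kernel[i][j]
                for i in range(kernel_h)
                for j in range(kernel_w)
            )
            for col in range(out_w)
        ]
        for row in range(out_h)
    ]

# Toy 3x4 "scan": dark region on the left, bright region on the right.
image = [
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
]

# Hand-written filter that responds where brightness jumps from left to right.
vertical_edge = [[-1, 1]]

response = convolve2d(image, vertical_edge)
for row in response:
    print(row)
```

The output is non-zero only in the column where the dark region meets the bright one, which is the sense in which a filter "highlights a feature".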

I don’t know why the technology hasn’t become more widespread in the domain. Probably because radiologists are paid really well and have a vested interest in preventing it… they’re not going to want to tag the images for their replacement. It’s probably also because medical data is hard to get permission for: to ethically train such a model, you would need to ask every patient, for every type of scan, if their images can be used for medical research, which is just another form/hurdle for everyone to jump over.

They can’t possibly train for every possible scenario.

AI: “Pregnant, 94% confidence”

Patient: “I confess, I shoved an umbrella up my asshole. Don’t send me to a gynecologist please!”

It’s called progress because the cost in frame 4 is just a tenth of what it was in frame 1.

Of course prices will still increase, but think of the PROFITS!

True! I’m an AI researcher, and using an AI agent to check the work of another agent does improve accuracy! I could see things becoming more and more like this, with teams of agents creating, reviewing, and approving. If you use GitHub Copilot agent mode though, it involves constant user interaction before anything is actually run. And I imagine (and can testify as someone who has installed different ML algorithms/tools on government hardware) that the operators/decision makers want to check the work, or understand the “thought process”, before committing to an action.

Will this be true forever as people become more used to AI as a tool? Probably not.

Whoosh

Could you explain?

You either deliberately or accidentally misinterpreted the joke. I kinda connect the “whoosh” to the adult animation show Archer, but I might be conflating them due to them emerging around the same time.

Oh no, I mean could you explain the joke? I believe I get the joke (shitty AI will replace experts). I was just leaving a comment about how systems that use LLMs to check the work of other LLMs do better than if they don’t. And that when I’ve introduced AI systems to stakeholders with consequential decision making, they tend to want a human in the loop. While also saying that this will probably change over time as AI systems get better and we get more used to using them. Is that a good thing? It will have to be on a case by case basis.
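A back-of-the-envelope sketch of why a second model checking the first can help. It assumes the reviewer's misses are independent of the first model's mistakes, which is an assumption made here for illustration and not something claimed in the thread; the function name and the example rates are likewise made up.

```python
def residual_error(p_wrong: float, p_caught: float) -> float:
    """Probability an answer is wrong AND the reviewer fails to flag it.

    Assumes the reviewer's misses are independent of the first model's
    errors. Models that share blind spots would do worse than this.
    """
    return p_wrong * (1.0 - p_caught)

# Hypothetical rates, chosen only to show the arithmetic.
no_review = residual_error(p_wrong=0.2, p_caught=0.0)
with_review = residual_error(p_wrong=0.2, p_caught=0.5)

print(f"no review:   {no_review:.2f}")
print(f"with review: {with_review:.2f}")
```

The sketch ignores the cost of the reviewer wrongly rejecting correct answers, and the independence assumption is the weak spot, which is one reason stakeholders still ask for a human in the loop.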

I’m kinda stoked by the tech as well and kinda understand how multiple LLMs can produce pretty novel ideas. I think it was in protein-mapping where I first heard of some breakthroughs.

While I’m happy to hear your experience shows you otherwise, it feels like you’re advocating for the devil. We don’t want to get lost in an angsty anti-capitalist echo chamber, but surely you can see how the comic is poking fun at our tendencies to very cleverly cause everything to turn to shit.

I guess woosh means missing the point? You are right on an individual basis, but if you look at it in tendencies, you might see why your swings didn’t connect.

Oh I completely agree that we are turning everything to shit in about a million different ways. And as oligarchs take over more, while AI is a huge money-maker, I can totally see regulation around it being scarce or entirely non-existent. So as it’s introduced into areas like the DoD, health, transportation, crime, etc., it’s going to be sold to the government first and its ramifications considered second. This has also been my experience as someone working in the intersection of AI research and government application. I saw Elon’s companies, employees, and tech immediately get contracts without consultation by FFRDCs or competition by other for-profit entities. I’ve also seen people on the ground say “I’m not going to use this unless I can trust the output.”

I’m much more on the side of “technology isn’t inherently bad, but our application of it can be.” Of course that can also be argued against with technology like atom bombs or whatever but I lean much more on that side.

Anyway, I really didn’t miss the point. I just wanted to share an interesting research result that this comic reminded me of.

Ok, I give up, where’s loss?

Booring. Find a new joke.

loss is eternal I think

Loss is incredibly boring. It’s become the millennial equivalent of a boomer joke. Might have been funny 17 years ago. But that was 17 years ago. Get a new joke.

loss has always been boring

If you’re working class, look in the mirror

The loss is the jobs we lost along the way.

The loss is the ~~jobs~~ lives we lost along the way.

Losing unnecessary jobs is not a bad thing, it’s how we as a society progress. The main problem is not having a safety net or means of support for those who need to find a new line of work.

> Losing unnecessary jobs is not a bad thing

Like Landlords?

Wouldn’t call that a job in the current context

The problem is that we’re not taxing robots and don’t have a UBI. Work robot ownership should be banned too (you only get assigned one for work).

Yep, UBI would solve a lot of social issues currently, including the whole scare about AI putting people out of work.

Not sure what you mean about work robot ownership, care to elaborate?

A robot is assigned by the government to work for you. You get one, but you can have others for non-commercial purposes.

Prevents monopolies and other issues that would lead to everyone getting robbed and left to die.

I don’t see how that would be practical in any shape or form with society as it exists today, TBH. You’re suggesting limitations on what normal people can own, based on the stated purpose of the asset. That’s going to be impossible to enforce. Why are we even getting one ‘commercial’ robot assigned to us? The average joe isn’t going to be able to make use of it effectively. Just tax the robots and make sure everybody has UBI.

The commercial robot would be put to use for you, and you would get a percent from it. The purpose is for you to “own” the Robot, specifically so certain people can’t complain about having their taxes/labor stolen.

You can’t get more in order to prevent corruption.

The personal use Robots are just there to do stuff for you, but you can’t use them to get money.

All the people who’ll die because of substandard AI bullshit.